Hard-Capping a bytes.Buffer.

Go has a handy struct called bytes.Buffer, which wraps a byte slice and implements the io.Writer and io.Reader interfaces. In short, it is a chunk of memory you can write into or read from using the usual stream functions. Here's a quick example.
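A minimal sketch of that idea, in case the snippet does not render for you: the helper name roundTrip is made up for this illustration. It writes into a Buffer through io.Writer and reads the same bytes back through io.Reader.

```go
package main

import (
	"bytes"
	"fmt"
	"io"
)

// roundTrip (hypothetical helper) writes msg into a fresh bytes.Buffer via
// the io.Writer interface and reads it back via the io.Reader interface.
func roundTrip(msg string) (string, error) {
	// Start with a 0-length byte slice; the buffer grows as needed.
	buf := bytes.NewBuffer([]byte{})

	var w io.Writer = buf
	if _, err := fmt.Fprint(w, msg); err != nil {
		return "", err
	}

	var r io.Reader = buf
	out, err := io.ReadAll(r)
	if err != nil {
		return "", err
	}
	return string(out), nil
}

func main() {
	out, err := roundTrip("hello, buffer")
	if err != nil {
		panic(err)
	}
	fmt.Println(out)
}
```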

As you can see, I've initialized the Buffer with a 0-length byte slice. That means the implementation grows the internal slice whenever a Write() would "overflow" it. In most cases that is really handy, because you don't need to worry about how big the buffer must be before writing to it.

But in some circumstances, you might not want the slice to grow without bound. One practical example of this is the implementation below.

This is a code segment of the Docker sandbox implementation of ranna (the service which is also used to run the code in this post 😏). The AttachToContainerNonBlocking method takes a writer to record the StdOut and StdErr outputs. If you used a bytes.Buffer there, a Docker container could spit out so much StdOut data that you would run out of memory at some point. You would simply have no control over how much output the container can dump into your application's memory.

But wait! You can set a capacity of slices in Go! Take a look at the example below.

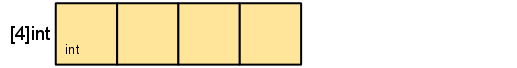

First of all, you need to know that slices are a thin abstraction over arrays in Go. A slice refers to a section of an underlying array and has a len as well as a cap. The len is the number of elements in the slice, and the cap is the number of elements available in the underlying array, counted from the slice's first element. As you can see, when you add elements to a slice using append and the capacity is exceeded, a new slice is returned with a newly allocated underlying array, here of size 8. Alternatively, when creating a slice using make, you can pass a value for cap to set the size of the underlying array yourself. That is especially useful if you append to the same slice very often. Without a large enough cap, Go allocates a new, larger array and copies the elements over each time an append exceeds the capacity, so setting it high up front saves allocations. If you want to read more about slices and arrays in Go, take a look at this post.
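To make the allocation point concrete, here is a small sketch. The helper countReallocs is a made-up name for this illustration; it counts how often append has to move to a larger array, using a change in cap as the signal.

```go
package main

import "fmt"

// countReallocs (hypothetical helper) appends n ints to a slice created with
// the given initial capacity and counts how often append had to move to a
// larger underlying array.
func countReallocs(initialCap, n int) int {
	s := make([]int, 0, initialCap)
	reallocs := 0
	for i := 0; i < n; i++ {
		before := cap(s)
		s = append(s, i)
		if cap(s) != before {
			reallocs++ // capacity changed, so a new array was allocated
		}
	}
	return reallocs
}

func main() {
	s := make([]int, 3, 8)
	fmt.Println(len(s), cap(s)) // 3 8

	fmt.Println(countReallocs(1000, 1000)) // 0: the capacity was large enough
	fmt.Println(countReallocs(0, 1000))    // greater than 0; the exact count depends on the Go version
}
```

Note that even in the pre-sized case nothing stops a further append from growing the slice again, which is exactly the issue discussed next.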

But as you can see below, that does not solve our problem. The internal slice will still grow over the specified capacity.
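A minimal sketch of that behavior, with a made-up helper named grownCap: the Buffer is created on top of a slice with a small capacity, and a single larger Write pushes it right past that value.

```go
package main

import (
	"bytes"
	"fmt"
)

// grownCap (hypothetical helper) creates a Buffer backed by a slice with the
// given capacity, writes n bytes into it, and reports the buffer capacity
// before and after the write.
func grownCap(initialCap, n int) (before, after int) {
	buf := bytes.NewBuffer(make([]byte, 0, initialCap))
	before = buf.Cap()

	// Writing more than initialCap bytes does not fail; the buffer
	// silently allocates a larger internal slice instead.
	buf.Write(make([]byte, n))

	return before, buf.Cap()
}

func main() {
	before, after := grownCap(4, 16)
	fmt.Println("cap before:", before) // cap before: 4
	fmt.Println("cap after: ", after)  // at least 16; the exact value depends on the Go version
}
```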

Actually, I think "cap", short for "capacity", is a really misleading name for that function, because it implies that you can actually "cap" the size of a slice. A better name would be size(), but that is just my opinion.

But how did I solve that problem? I just wrote a wrapper around bytes.Buffer which holds an actual cap and keeps track of the internal buffer length.

As you can see, CappedBuffer embeds bytes.Buffer (Go has no inheritance) and shadows its Write method. On each Write, it calculates the size the inner buffer would have after adding the passed byte slice. If this number exceeds the specified cap, the data is not written to the buffer and a "buffer overflow" error is returned. Otherwise, the byte slice is passed to the Write function of the wrapped buffer.
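If the snippet does not render for you, the following is a rough sketch of the approach described above, not the exact ranna code. The constructor name NewCappedBuffer, the error variable ErrBufferOverflow and the field name cap are assumptions made for this illustration.

```go
package main

import (
	"bytes"
	"errors"
	"fmt"
)

// ErrBufferOverflow (assumed name) is returned when a write would push the
// buffer past its cap.
var ErrBufferOverflow = errors.New("buffer overflow")

// CappedBuffer embeds *bytes.Buffer and refuses writes that would make the
// buffer longer than cap bytes.
type CappedBuffer struct {
	*bytes.Buffer
	cap int
}

// NewCappedBuffer (assumed name) wraps buf and limits it to limit bytes.
func NewCappedBuffer(buf []byte, limit int) *CappedBuffer {
	return &CappedBuffer{Buffer: bytes.NewBuffer(buf), cap: limit}
}

// Write shadows bytes.Buffer.Write. It rejects p as a whole if the resulting
// length would exceed the cap; otherwise it delegates to the inner buffer.
func (b *CappedBuffer) Write(p []byte) (int, error) {
	if b.Len()+len(p) > b.cap {
		return 0, ErrBufferOverflow
	}
	return b.Buffer.Write(p)
}

func main() {
	buf := NewCappedBuffer(make([]byte, 0, 8), 8)

	_, err := buf.Write([]byte("12345"))
	fmt.Println(err) // <nil>

	_, err = buf.Write([]byte("6789"))
	fmt.Println(err) // buffer overflow

	fmt.Println(buf.String()) // 12345
}
```

One caveat with this sketch: only Write is guarded. Methods promoted from the embedded buffer, such as WriteString or ReadFrom, still bypass the check, so a stricter version would shadow those too or wrap the buffer in a named field instead of embedding it.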

By the way, this implementation is also available in the public packages of the ranna engine. 😉